The Pinnacle Architecture: RSA-2048 Breakable with 100K Qubits — What This Means for Bitcoin

On February 12, 2026, researchers Paul Webster, Lucas Berent, Omprakash Chandra, Evan T. Hockings, Nouédyn Baspin, Felix Thomsen, Samuel C. Smith, and Lawrence Z. Cohen at Iceberg Quantum (Sydney, Australia) published a paper introducing the Pinnacle Architecture — a fault-tolerant quantum computing design that fundamentally reduces the physical qubit cost of utility-scale quantum computation.

The headline finding: RSA-2048 can be factored with fewer than 100,000 physical qubits using quantum low-density parity check (QLDPC) codes, roughly an order-of-magnitude reduction from the previous best estimate of ~900,000 qubits.

Full paper: arXiv:2602.11457

Prior to this work, the best published estimate for breaking RSA-2048 was approximately 900,000 physical qubits, established by Craig Gidney in 2025 using optimized surface codes with improved magic state distillation. That estimate itself was a significant reduction from the ~20 million qubit figure from Gidney & Ekerå (2019).

The Pinnacle Architecture reduces the requirement by another order of magnitude — to fewer than 100,000 physical qubits. This matters because:

• Multiple hardware vendors have roadmaps targeting 100K+ qubits within 3–5 years. IBM's Quantum Development Roadmap, Google's Willow architecture, Atom Computing's neutral atom arrays, and QuEra's fault-tolerant plans all converge on this scale.

• The gap between laboratory and cryptographic relevance has collapsed. Breaking RSA-2048 was once projected to require millions of qubits — a figure so large it pushed the threat into a distant, abstract future. At 100,000 qubits, it sits squarely within the engineering trajectory of current hardware programs.

• This paper moves “utility-scale” quantum computing from a distant milestone to a near-term engineering target. The question is no longer whether the algorithm exists (Shor's algorithm has existed since 1994), but whether the machine can be built at sufficient scale. The Pinnacle Architecture demonstrates that the scale required is far smaller than previously understood.

Quantum computers are inherently noisy. Every physical qubit is subject to decoherence, gate errors, and measurement errors. To perform reliable computation, quantum error correction is required — encoding logical qubits (the qubits your algorithm uses) into many physical qubits (the hardware qubits on the chip).

The dominant approach to quantum error correction has been surface codes. Surface codes are attractive because they require only nearest-neighbor connectivity on a 2D grid — a layout that maps naturally onto superconducting qubit hardware. However, they are extremely expensive in qubit overhead:

• A single logical qubit in a surface code requires hundreds to thousands of physical qubits, depending on the target error rate.

• Shor's algorithm for RSA-2048 factoring requires roughly 2,048 logical qubits for the modular exponentiation register alone, plus ancilla qubits for arithmetic and error correction.

• When you multiply the logical qubit count by the surface code encoding ratio, you arrive at estimates of ~1 million or more physical qubits — a number that has defined the “quantum threat timeline” for the past decade.

The bottleneck is not Shor's algorithm. The algorithm is well understood and efficient. The bottleneck is the error correction overhead — the multiplicative cost of encoding each logical qubit into physical hardware.
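To make that multiplication concrete, the sketch below assumes the rotated surface code, where a distance-d patch uses d² data qubits plus d² − 1 measurement qubits (2d² − 1 in total). The code distances, and the choice to count only the 2,048-qubit register, are illustrative assumptions rather than figures from the paper; routing space, arithmetic ancillas, and magic state factories are ignored.

```python
# Rough surface-code overhead estimate (illustrative assumptions only).

def physical_per_logical(distance: int) -> int:
    """Physical qubits in one rotated surface code patch of the given distance."""
    return 2 * distance * distance - 1


def register_cost(logical_qubits: int, distance: int) -> int:
    """Physical qubits needed to encode a register of logical qubits."""
    return logical_qubits * physical_per_logical(distance)


LOGICAL_QUBITS = 2048  # exponentiation register size quoted in the text

for d in (15, 21, 27):  # assumed code distances, not taken from the paper
    print(f"d={d}: {physical_per_logical(d)} per logical qubit, "
          f"{register_cost(LOGICAL_QUBITS, d):,} for the register")
```

Even before counting ancillas and factories, the register alone lands between roughly 0.9 million and 3 million physical qubits for these distances.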

Quantum Low-Density Parity Check (QLDPC) codes are an alternative family of error-correcting codes that achieve fundamentally better encoding rates than surface codes.

• Higher encoding rate: QLDPC codes encode more logical qubits per physical qubit. Where a surface code might require 1,000 physical qubits per logical qubit, a QLDPC code can achieve the same error suppression with a fraction of that overhead.

• Same error suppression: QLDPC codes can achieve the same target logical error rate as surface codes, just with fewer physical qubits. The error suppression is not compromised — it is achieved more efficiently.

• Historical trade-off: The reason QLDPC codes were not previously used in quantum computing architecture proposals is that they are harder to implement in hardware. Unlike surface codes (which require only nearest-neighbor qubit interactions on a 2D grid), QLDPC codes require more complex connectivity patterns between qubits. Additionally, performing logical gates on QLDPC-encoded qubits was an unsolved problem until recently.

The Pinnacle Architecture solves both of these problems: it provides a concrete hardware layout with only quasi-local connectivity (not all-to-all), and it introduces new techniques for performing arbitrary logical operations on QLDPC-encoded qubits efficiently.
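As a rough illustration of the encoding-rate gap, the sketch below compares a rotated surface code with the [[144, 12, 12]] bivariate bicycle code published by IBM researchers in 2024, which stores 12 logical qubits in 144 data qubits plus 144 check qubits. That code is used here only as a well-known QLDPC example; it is an assumption for illustration and not necessarily the code family used in the Pinnacle Architecture. Equal code distance also does not guarantee an identical logical error rate.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Code:
    name: str
    physical: int  # data + check qubits in one code block
    logical: int   # logical qubits stored in that block
    distance: int

    @property
    def overhead(self) -> float:
        """Physical qubits spent per logical qubit."""
        return self.physical / self.logical


def rotated_surface(distance: int) -> Code:
    """One rotated surface code patch: 2d^2 - 1 physical qubits, 1 logical qubit."""
    return Code("rotated surface", 2 * distance * distance - 1, 1, distance)


# Example QLDPC code (assumed for illustration, not taken from the paper).
BICYCLE = Code("[[144,12,12]] bivariate bicycle", 144 + 144, 12, 12)
SURFACE = rotated_surface(12)  # same distance as the QLDPC example

for code in (SURFACE, BICYCLE):
    print(f"{code.name}: {code.overhead:.0f} physical qubits per logical qubit")
print(f"ratio: {SURFACE.overhead / BICYCLE.overhead:.1f}x")
```

Under these assumptions the QLDPC block needs about one twelfth of the physical qubits per logical qubit.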

The architecture consists of three core components:

1. Processing Units

Processing units are built from bridged QLDPC code blocks equipped with measurement gadget systems. Each processing unit can perform arbitrary logical Pauli product measurements per logical cycle. This is the computational workhorse of the architecture — it executes the logical gates of Shor's algorithm (or any other quantum algorithm) by performing sequences of Pauli measurements.

The key innovation is that these measurements are performed directly on QLDPC-encoded qubits, without the need to decode and re-encode between operations. This eliminates a major source of overhead in previous QLDPC proposals.

2. Magic Engines

Magic state distillation is the process of creating high-fidelity “magic states” — special quantum states required for universal quantum computation. Without magic states, a quantum computer is limited to Clifford operations (which are classically simulable). Non-Clifford gates (specifically T-gates) require magic states, and producing them is one of the most resource-intensive parts of fault-tolerant quantum computing.

The Pinnacle Architecture introduces magic engines: QLDPC code blocks that simultaneously handle magic state distillation and injection. Each magic engine provides one high-fidelity magic state per logical cycle per processing unit. This is a critical efficiency gain — in surface code architectures, magic state factories consume a large fraction of the total qubit budget.

3. Memory

Memory is optional, low-overhead quantum storage that processing units access via ports. Memory blocks use QLDPC codes with even higher encoding rates, providing efficient storage for intermediate quantum states during long computations.

Additional innovations:

• Clifford frame cleaning: A new technique for efficient parallelism that tracks and corrects accumulated Clifford frame errors without requiring additional physical operations. This reduces the overhead of parallel logical operations.

• Modular structure: The architecture requires only quasi-local connectivity — qubits need to interact with nearby qubits, not with every other qubit on the chip. This is critical for hardware feasibility, as all-to-all connectivity is impractical at scale.

• Compilation via Pauli-based computation: The architecture compiles quantum algorithms into sequences of Pauli product measurements, which can be executed natively by the processing units. This eliminates the need for complex gate decomposition and scheduling.
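Pauli-based computation works with products of Pauli operators such as X⊗X⊗I. Two such products commute exactly when they differ on an even number of qubits where both act non-trivially, and commuting products can in principle be measured in the same step. The sketch below shows that check together with a naive greedy grouping. The function names and the grouping strategy are illustrative assumptions, not the paper's compiler.

```python
# Illustrative sketch: commutation of Pauli products and a naive greedy layering.
# Names and strategy are assumptions for illustration, not the paper's compiler.

VALID = set("IXYZ")


def commutes(p: str, q: str) -> bool:
    """Return True if Pauli strings p and q (e.g. "XIZ") commute."""
    if len(p) != len(q):
        raise ValueError("Pauli strings must act on the same number of qubits")
    if not (set(p) <= VALID and set(q) <= VALID):
        raise ValueError("Pauli strings may only contain I, X, Y, Z")
    # Count qubits where both operators are non-identity and different.
    clashes = sum(1 for a, b in zip(p, q) if a != "I" and b != "I" and a != b)
    return clashes % 2 == 0


def greedy_layers(products: list[str]) -> list[list[str]]:
    """Split an ordered list of Pauli products into consecutive commuting layers."""
    layers: list[list[str]] = []
    for p in products:
        if layers and all(commutes(p, q) for q in layers[-1]):
            layers[-1].append(p)  # commutes with everything in the current layer
        else:
            layers.append([p])    # start a new layer to preserve ordering
    return layers


print(greedy_layers(["XXI", "ZZI", "IIZ", "XII", "ZII"]))
```

Here the first three products commute pairwise and share a layer, while `XII` anticommutes with `ZZI` and `ZII` anticommutes with `XII`, so each starts a new layer.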

The paper benchmarks the Pinnacle Architecture against the problem of factoring RSA-2048 using Shor's algorithm:

Key parameters and results:

• Physical error rate: 10⁻³ (one error per 1,000 gate operations)

• Code cycle time: 1 microsecond

• Reaction time: 10 microseconds (classical control electronics latency)

• Result: < 100,000 physical qubits with 1 processing unit

• Runtime: approximately 1 month with a single processing unit

• Scaled configuration: With 81 processing units (~1 million physical qubits), the system can factor RSA-2048 at a physical error rate of 10⁻⁴ with a code cycle time of t_c = 1 ms in approximately 3 months

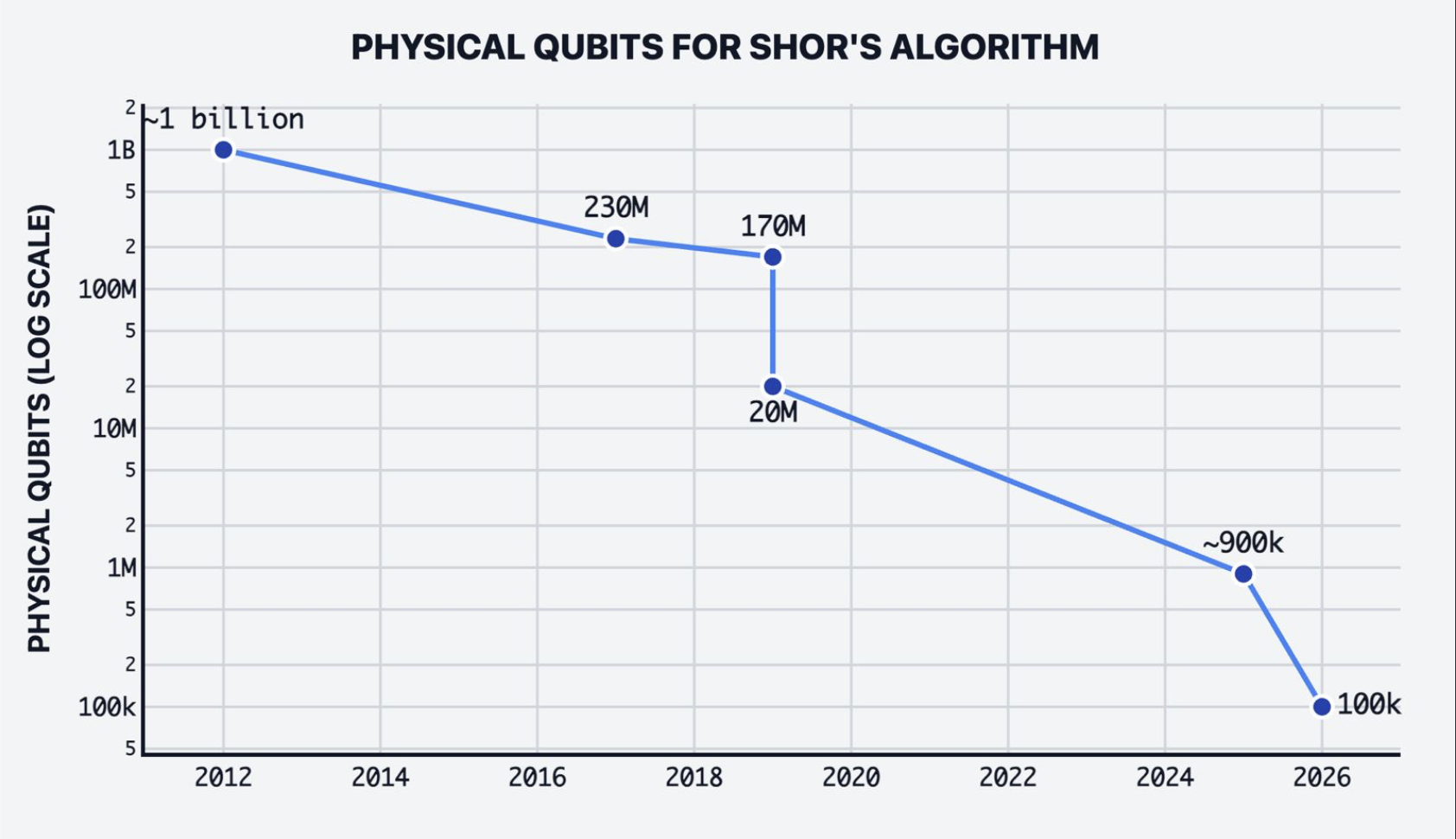

The estimated cost of breaking RSA-2048 has dropped four orders of magnitude in 14 years, from ~1 billion physical qubits (2012) to ~100,000 (2026).

The following table shows the progression of estimated physical qubit requirements for factoring RSA-2048:

| Year | Source | Physical Qubits | Method |
|---|---|---|---|
| 2012 | Van Meter et al. | ~1 billion | Surface codes |
| 2017 | Roetteler et al. | ~230 million | Optimized circuits |
| 2019 | Gidney & Ekerå | ~170 million | Surface codes + windowed arithmetic |
| 2019 | Gidney & Ekerå | ~20 million | Optimized surface codes |
| 2025 | Gidney | ~900,000 | Surface codes + improved distillation |
| 2026 | Webster et al. | ~100,000 | QLDPC (Pinnacle Architecture) |
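The step-by-step reductions in the table can be checked with a few lines of arithmetic. The sketch below treats each approximate figure as a point value, which is a simplification.

```python
import math

# (year, estimated physical qubits) copied from the table, treated as point values.
ESTIMATES = [
    (2012, 1_000_000_000),
    (2017, 230_000_000),
    (2019, 170_000_000),
    (2019, 20_000_000),
    (2025, 900_000),
    (2026, 100_000),
]


def reduction_factor(old: int, new: int) -> float:
    """How many times smaller the new estimate is."""
    return old / new


def orders_of_magnitude(old: int, new: int) -> float:
    """Base-10 logarithm of the reduction factor."""
    return math.log10(old / new)


for (y0, q0), (y1, q1) in zip(ESTIMATES, ESTIMATES[1:]):
    print(f"{y0} -> {y1}: {reduction_factor(q0, q1):.1f}x")

total = orders_of_magnitude(ESTIMATES[0][1], ESTIMATES[-1][1])
print(f"overall: {total:.1f} orders of magnitude")
```

The largest single steps are the 2019 to 2025 drop (about 22x) and the 2025 to 2026 drop (9x).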

The Pinnacle Architecture is not limited to cryptographic applications. The paper also benchmarks the architecture against the Fermi-Hubbard model — a fundamental problem in condensed matter physics and materials science.

Computing the ground state energy of the Fermi-Hubbard model is a key challenge in quantum chemistry and materials design. With surface codes, this task requires hundreds of thousands of physical qubits. The Pinnacle Architecture achieves the same computation with tens of thousands of physical qubits — again, an order-of-magnitude improvement.

This is significant because it demonstrates that the architecture is general-purpose, not optimized solely for cryptanalysis. The same QLDPC-based fault-tolerant framework that reduces the cost of breaking RSA also reduces the cost of solving industrially relevant quantum chemistry problems. The implications extend to drug discovery, materials engineering, and optimization — any domain where quantum advantage is expected.

The paper’s results depend on the following hardware assumptions:

• Physical error rate of 10⁻³: One error per 1,000 gate operations. This has already been demonstrated by Google (Willow), IBM (Heron), and other hardware vendors. It is not a speculative parameter; it is the current state of the art.

• Code cycle time of 1 microsecond: The time for one round of error correction syndrome extraction. This is achievable with superconducting qubits, which operate at nanosecond gate speeds with microsecond measurement and reset times.

• Reaction time of 10 microseconds: The latency of the classical control electronics that process syndrome measurements and determine corrections. Modern FPGA-based control systems can achieve this.

• Quasi-local connectivity: The architecture does not require all-to-all qubit connectivity. Qubits only need to interact with nearby qubits in a structured layout. This is critical for hardware feasibility — all-to-all connectivity is impractical at scale for any qubit technology.

The architecture is modular: additional processing units can be added to reduce runtime at the cost of more total physical qubits. This is a space-time tradeoff. A single processing unit (~100K qubits) can factor RSA-2048 in ~1 month at a 10⁻³ error rate and a 1 microsecond code cycle, while 81 processing units (~1M qubits) can do it in ~3 months at a different operating point: a lower physical error rate of 10⁻⁴ and a much slower 1 ms code cycle. The modular design means the architecture scales with hardware availability.
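Because the two configurations use different cycle times, wall-clock runtime alone hides how much work each one does. The sketch below converts both runtimes into a count of code cycles, assuming a 30-day month; the function name and that month length are assumptions for illustration.

```python
SECONDS_PER_DAY = 86_400
MICROSECONDS_PER_SECOND = 1_000_000


def code_cycles(runtime_days: int, cycle_time_us: int) -> int:
    """Number of code cycles that fit in the runtime, using integer arithmetic."""
    total_us = runtime_days * SECONDS_PER_DAY * MICROSECONDS_PER_SECOND
    return total_us // cycle_time_us


# Assumes 30-day months; cycle times are those quoted in the benchmark.
single_unit = code_cycles(runtime_days=30, cycle_time_us=1)      # ~1 month at 1 microsecond
scaled = code_cycles(runtime_days=90, cycle_time_us=1_000)       # ~3 months at 1 ms

print(f"1 processing unit:   {single_unit:.3e} code cycles")
print(f"81 processing units: {scaled:.3e} code cycles")
print(f"ratio: {single_unit / scaled:.0f}x")
```

Under these assumptions the 81-unit configuration finishes in roughly 333 times fewer code cycles, which is where the added parallelism shows up even though its wall-clock time is longer.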

This is where the Pinnacle Architecture connects directly to QSHA256’s mission.

RSA-2048 is harder to break with a quantum computer than ECDSA-256 (secp256k1). Solving the elliptic curve discrete logarithm problem (ECDLP) for Bitcoin’s secp256k1 curve with Shor’s algorithm requires fewer logical qubits than factoring RSA-2048. If RSA-2048 falls at 100,000 physical qubits, ECDSA-256 is even more vulnerable.
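For a sense of scale, the sketch below evaluates two logical-qubit formulas from earlier literature, neither of which is taken from the Pinnacle paper: 2n + 2 qubits for factoring an n-bit modulus (Häner, Roetteler, and Svore) and 9n + 2⌈log₂ n⌉ + 10 qubits for the discrete logarithm on an n-bit prime-field curve (Roetteler et al., 2017). Both are counts for particular circuit constructions, and other constructions trade qubits for gates differently, so the numbers are indicative only.

```python
def rsa_logical_qubits(modulus_bits: int) -> int:
    """Logical qubits for factoring with the 2n + 2 construction."""
    return 2 * modulus_bits + 2


def ecdlp_logical_qubits(field_bits: int) -> int:
    """Logical qubits for ECDLP using 9n + 2*ceil(log2 n) + 10."""
    ceil_log2 = (field_bits - 1).bit_length()  # exact integer ceil(log2 n) for n >= 1
    return 9 * field_bits + 2 * ceil_log2 + 10


rsa = rsa_logical_qubits(2048)
ecc = ecdlp_logical_qubits(256)
print(f"RSA-2048:  {rsa} logical qubits")
print(f"secp256k1: {ecc} logical qubits")
```

With these formulas the 256-bit curve needs 2,330 logical qubits against 4,098 for RSA-2048.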

The numbers are stark:

• QSHA256 tracks approximately 6.9 million BTC with exposed public keys that are at risk from quantum attack.

• This exposure spans 7 address types: P2PK, P2PKH, P2WPKH, P2SH, P2WSH, P2TR, and P2MS.

• P2PK outputs are at highest risk — the public key is stored directly in the transaction output script. No quantum computer is needed to find the key; it is already visible on-chain. A quantum computer is only needed to derive the corresponding private key from that public key.

• P2TR (Taproot) outputs encode the 32-byte x-only public key directly in the address itself, making it trivially reconstructable.

• Address-reuse outputs (P2PKH, P2WPKH) expose the public key when a transaction spends from the address. If the address is reused and still holds funds, those funds are vulnerable.
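The difference between key-exposing and hash-based outputs is visible in the output script itself. The sketch below classifies a raw scriptPubKey by its standard byte pattern and reports whether a public key is visible in the output before any spend. The function name is hypothetical, bare multisig (P2MS) is not handled and falls through to "other", and this is not how QSHA256 itself is implemented.

```python
def classify_script(script: bytes) -> tuple[str, bool]:
    """Return (output type, True if a public key is visible in the output itself)."""
    n = len(script)
    # P2PK: <push 33 or 65 bytes> <pubkey> OP_CHECKSIG
    if n in (35, 67) and script[0] == n - 2 and script[-1] == 0xAC:
        return "P2PK", True
    # P2TR: OP_1 <push 32> <x-only output key>
    if n == 34 and script[:2] == b"\x51\x20":
        return "P2TR", True
    # P2PKH: OP_DUP OP_HASH160 <push 20> <hash> OP_EQUALVERIFY OP_CHECKSIG
    if n == 25 and script[:3] == b"\x76\xa9\x14" and script[-2:] == b"\x88\xac":
        return "P2PKH", False
    # P2WPKH: OP_0 <push 20> <key hash>
    if n == 22 and script[:2] == b"\x00\x14":
        return "P2WPKH", False
    # P2SH: OP_HASH160 <push 20> <script hash> OP_EQUAL
    if n == 23 and script[:2] == b"\xa9\x14" and script[-1] == 0x87:
        return "P2SH", False
    # P2WSH: OP_0 <push 32> <script hash>
    if n == 34 and script[:2] == b"\x00\x20":
        return "P2WSH", False
    return "other", False


# Dummy payload bytes stand in for real keys and hashes.
p2pk = bytes([0x21]) + b"\x02" * 33 + b"\xac"
p2tr = b"\x51\x20" + b"\x11" * 32
p2wpkh = b"\x00\x14" + b"\x22" * 20

for script in (p2pk, p2tr, p2wpkh):
    print(classify_script(script))
```

Hash-based types report `False` here because the key only appears on-chain once the output is spent, which is the address-reuse case described above.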

Timeline pressure: If 100,000-qubit machines arrive by 2028–2030 (per current vendor roadmaps), Bitcoin has limited time to complete a post-quantum migration. The BIP-360 and BIP-361 proposals provide a migration path in draft form, but require years of implementation, testing, peer review, and community consensus before deployment.

See our analysis: Bitcoin’s Post-Quantum Migration Path: BIP-360 & BIP-361

Explore the live threat data: ECDSA Signatures vs SHA-256 Hashing

Q-Day — the day a quantum computer can break current cryptographic standards — just got closer.

The Pinnacle Architecture does not build the machine. It does not claim that RSA-2048 has been broken. What it does is show, under its stated hardware assumptions, that an architectural blueprint for breaking RSA-2048 exists at a feasible physical scale. The remaining challenges are engineering (building 100K+ qubit machines with sustained low error rates), not theoretical.

This distinction matters because engineering challenges have timelines. Theoretical barriers do not. Once the architecture is proven feasible, the question becomes “when” not “if.”

“Harvest now, decrypt later” attacks add urgency. Nation-state adversaries and sophisticated threat actors are already collecting encrypted data and blockchain transactions today, with the intent of decrypting them when quantum computers become available. Data encrypted with RSA today, and Bitcoin transactions with exposed ECDSA public keys, are being harvested for future quantum cryptanalysis. The Pinnacle Architecture compresses the timeline for when that harvested data becomes vulnerable.

Every Bitcoin transaction that exposes a public key — whether through P2PK outputs, address reuse, or Taproot key-path spends — adds to the corpus of material available for future quantum attack. The clock is not running from the moment a quantum computer is built; it is running from the moment the data is exposed.

The Pinnacle Architecture represents a paradigm shift in fault-tolerant quantum computing. By replacing surface codes with QLDPC codes and introducing efficient magic state engines, it reduces the cost of utility-scale quantum computation by an order of magnitude.

For Bitcoin and the broader cryptographic ecosystem, this paper compresses the timeline for quantum threats from “decades away” to “years away.” The question is no longer whether quantum computers will break current cryptography, but whether the migration to post-quantum standards will be completed in time.

The path forward requires:

• Accelerated adoption of post-quantum cryptographic standards — NIST has already standardized ML-KEM, ML-DSA, and SLH-DSA. Integration into production systems must begin now.

• Bitcoin-specific migration — BIP-360 and BIP-361 provide the architectural framework. Implementation, testing, and consensus activation must be prioritized before the 100K-qubit threshold is crossed.

• Continuous monitoring — Tools like QSHA256 provide real-time visibility into the scale of quantum-vulnerable assets on the Bitcoin blockchain. This data is essential for risk assessment and prioritization.

The Pinnacle Architecture is a milestone, not a destination. It tells us the destination is closer than we thought.

Explore the live threat comparison at ECDSA Signatures vs SHA-256 Hashing. Compare post-quantum signature sizes at Size Matters. Track real-time quantum exposure data at Quantum Vulnerability Agent. Read about Bitcoin's migration path at BIP-360 & BIP-361 Analysis.

• Authors: Paul Webster, Lucas Berent, Omprakash Chandra, Evan T. Hockings, Nouédyn Baspin, Felix Thomsen, Samuel C. Smith, Lawrence Z. Cohen — Iceberg Quantum, Sydney